From 'turbot ax' to GPT: Hype and reality

Pattern matching AI and its effect on jobs (as of March 2024)

As with all things AI, I’m prefacing my thoughts by mentioning the exact time I’m writing them - who’s to say someone won’t drop another banger model tomorrow that changes everything (if some annoying Twitter/X hype accounts are to be believed, this happens every week).

I came across this interesting analysis of the kinds of jobs being replaced by AI, using freelance job trends as the data source.

Why analyze freelance jobs instead of actual jobs from large companies like Microsoft and Amazon? First, if there’s going to be any impact on certain jobs, we’ll probably see it earliest in the freelance market because large companies will be much slower in adopting AI tools. Second, it’s clear from the latest Upwork earnings report that tech layoffs aren’t affecting freelance markets like Upwork as much.

I felt the idea and methodology were quite reasonable and the results were as I expected - LLMs will first replace text-related low-level jobs (writing, customer support tickets, translation and so on).

About two weeks later, we have this, right on schedule to confirm my biases -

Now, take this with a sack of salt - Klarna is looking to IPO this year; they want to look profitable and ride the AI trend.

took a closer look and found it underwhelming. No one reads the docs. These assistants turn docs into chat text, that people read!

Well, maybe 2/3 of the people that use it don’t actually need real support; they just need stuff that already exists in the docs.

I think this story is potentially more a story about the simplicity/stupidity of the average support request rather than the impressiveness of AI agents.

I think that’s all there is to it.

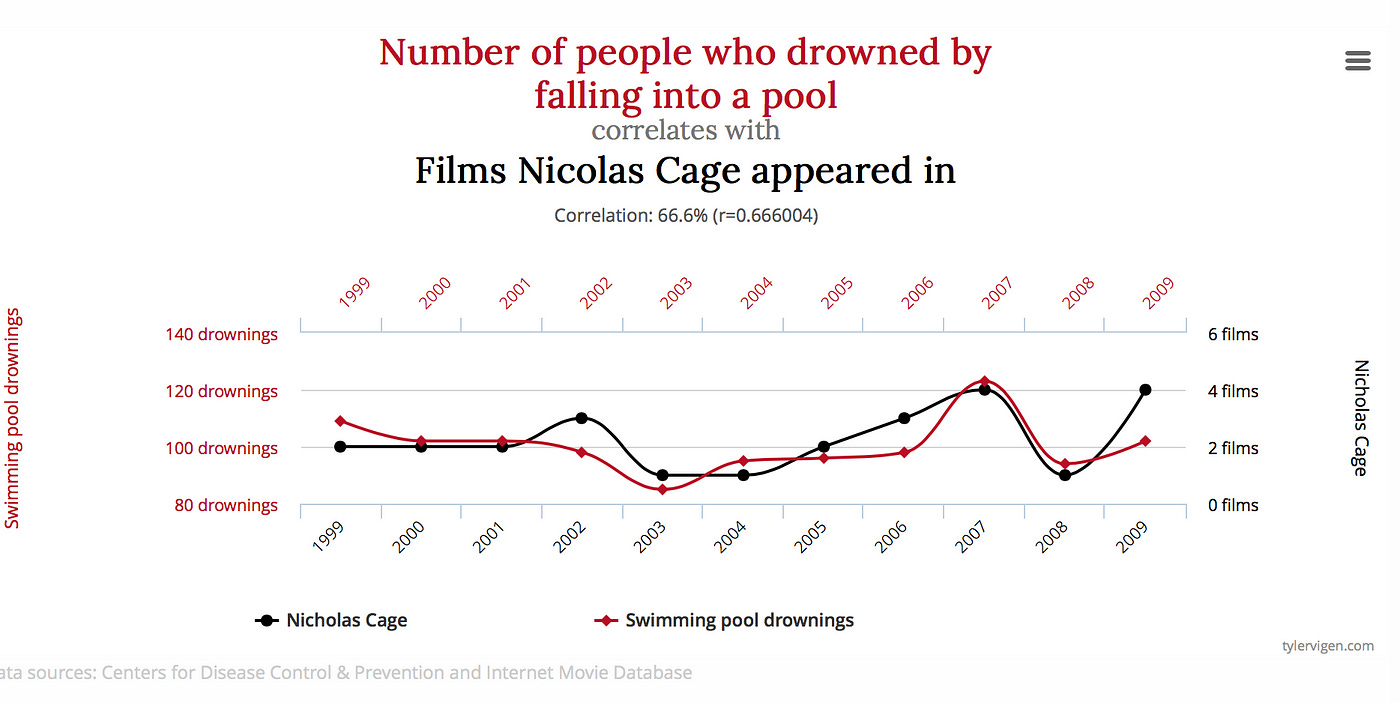

We are many years away from replacing high-level jobs - as renowned roboticist and AI researcher Rodney Brooks explains, the current models fundamentally don’t have an understanding of concepts; it's just statistical correlation. LLMs are correlations between words, vision models are correlations between pixels.

Geoffrey Hinton, one of the pioneers of AI and a former scientist at Google Brain, infamously said in 2016:

“I think if you work as a radiologist, you are like the coyote that’s already over the edge of the cliff but hasn’t yet looked down,” Hinton said. “People should stop training radiologists now. It’s just completely obvious within five years deep learning is going to do better than radiologists …. It might be 10 years, but we’ve got plenty of radiologists already.”

Certain deep learning models did get better than radiologists, but those are in extremely specific and limited scenarios; they are nowhere close to replacing anything (source: am a radiologist). On the contrary, we are facing a global radiologist shortage. At the moment, these models are like the red squiggly lines highlighting grammatical errors - pointing us to where the potential mistakes are and letting us take a call. Is that a pathological lesion, a normal anatomical variation or an artifact?

Tangential fun fact: fixing spelling mistakes in searches is what led Google to its current ad-centric business model.

Google kept making embarrassing mistakes, such as telling users who’d typed “TurboTax” that they probably meant “turbot ax.” (A turbot is a flatfish that lives in the North Atlantic.) A spell-checker is only as good as its dictionary, and Shazeer realized that, in the Web, Google had access to the biggest dictionary there had ever been. He wrote a program that used the statistical properties of text on the Web to determine which words were likely misspellings. The software learned that “pritany spears” and “brinsley spears” both meant “Britney Spears”. In collaboration with Jeff and an engineer named Georges Harik, Shazeer applied similar techniques to associate ads with Web pages.
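To make the idea in the quote concrete, here is a minimal sketch of a frequency-based spell-checker. It is not Google's actual program. It is the well-known "pick the most common known word within a couple of edits" approach, and the `WEB_COUNTS` table is invented for illustration, standing in for word counts gathered from the Web.

```python
from collections import Counter

# Toy stand-in for "text on the Web". The counts are made up for illustration.
WEB_COUNTS = Counter({
    "turbotax": 900,
    "turbot": 3,
    "ax": 40,
    "britney": 800,
    "spears": 850,
    "brittany": 120,
})

LETTERS = "abcdefghijklmnopqrstuvwxyz"


def edits1(word):
    """All strings one edit (delete, swap, replace, insert) away from word."""
    splits = [(word[:i], word[i:]) for i in range(len(word) + 1)]
    deletes = [a + b[1:] for a, b in splits if b]
    swaps = [a + b[1] + b[0] + b[2:] for a, b in splits if len(b) > 1]
    replaces = [a + c + b[1:] for a, b in splits if b for c in LETTERS]
    inserts = [a + c + b for a, b in splits for c in LETTERS]
    return set(deletes + swaps + replaces + inserts)


def known(words):
    """Keep only the strings that actually appear in the corpus."""
    return {w for w in words if w in WEB_COUNTS}


def correct(word):
    """Return the most frequent known word within two edits, else the input."""
    word = word.lower()
    if word in WEB_COUNTS:
        # Seen on the Web, so trust it even if no dictionary lists it.
        return word
    candidates = known(edits1(word))
    if not candidates:
        candidates = known(e2 for e1 in edits1(word) for e2 in edits1(e1))
    if not candidates:
        return word
    return max(candidates, key=WEB_COUNTS.__getitem__)


print(correct("TurboTax"))  # left alone, because the corpus has seen it
print(correct("britny"), correct("speers"))
```

The point of the sketch is the first branch of `correct`: a word that shows up often enough in the corpus counts as correct, so "TurboTax" is never split into "turbot ax". Everything is still just counting. Nothing in the code knows what a flatfish or a pop star is.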

The AI doesn't truly understand language or have common-sense knowledge - it's just very sophisticated pattern matching. Machine learning is often described as a "black box" because the inner workings of the models can be difficult to interpret, even for the engineers who design them. This brilliant video by CGP Grey was originally made to illustrate why machine learning recommendation algorithms, such as YouTube's, were unpredictable (they still are), and it holds just as well for their spiritual successors, LLMs (in case you were wondering how this video on ChatGPT is 6 years old). You cannot expect reliable and repeatable results; you’ll likely get different results each time you hit refresh - some impressive, some bizarre.

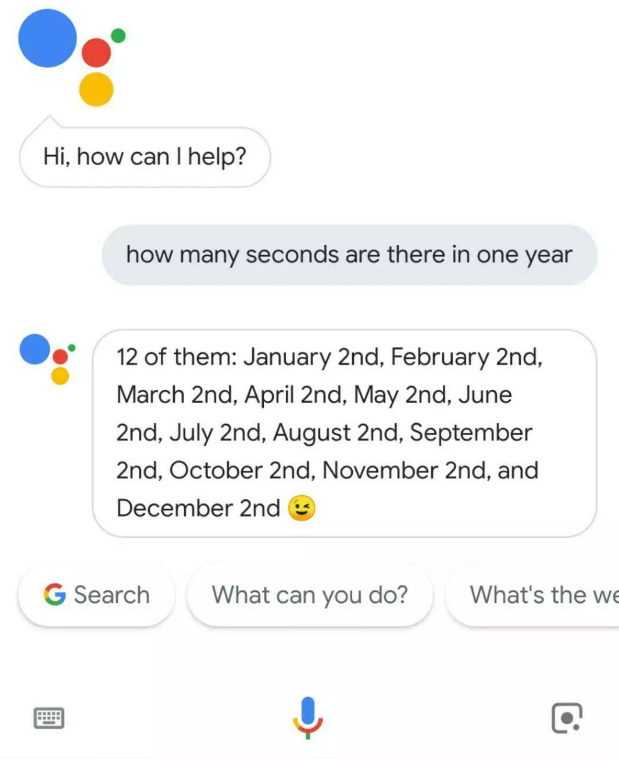

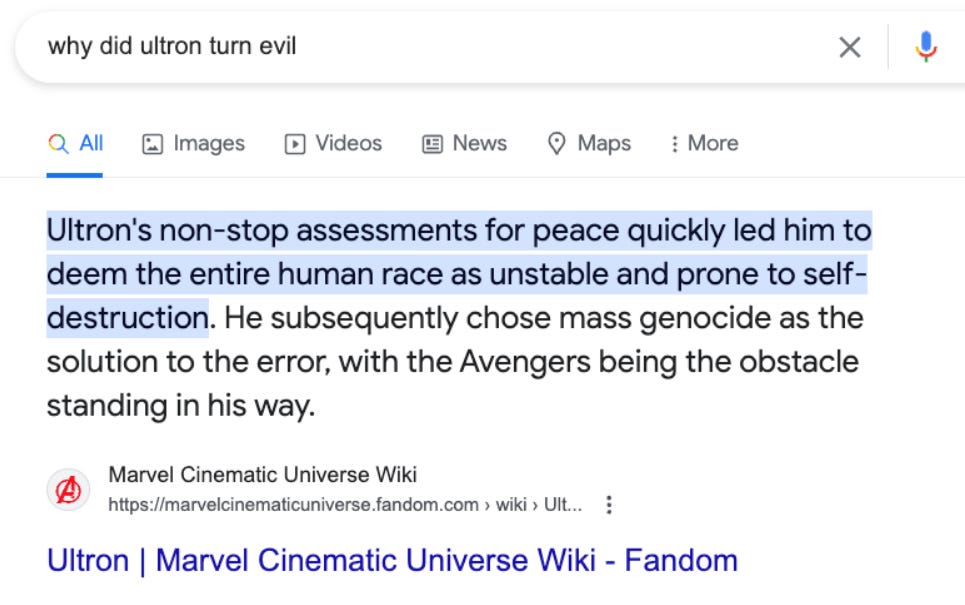
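One common reason for the "different results each time you hit refresh" behaviour is that text generators usually pick each next word by sampling from a probability distribution instead of always taking the top choice. Below is a minimal sketch of that idea. The scores in `NEXT_WORD_SCORES` are invented, and the function names are hypothetical, not any real model's API.

```python
import math
import random

# Hypothetical scores for the next word. A real model computes these itself.
NEXT_WORD_SCORES = {"cat": 2.0, "dog": 1.5, "turbot": 0.2}


def sample_next_word(scores, temperature=1.0, rng=random):
    """Draw one word with probability proportional to exp(score / temperature)."""
    words = list(scores)
    weights = [math.exp(scores[w] / temperature) for w in words]
    return rng.choices(words, weights=weights, k=1)[0]


def greedy_next_word(scores):
    """Always return the single highest-scoring word (fully repeatable)."""
    return max(scores, key=scores.get)


# Five "refreshes" of the same prompt can give different words.
print([sample_next_word(NEXT_WORD_SCORES) for _ in range(5)])
# The greedy choice never changes.
print(greedy_next_word(NEXT_WORD_SCORES))
```

A higher `temperature` flattens the weights, so unlikely words such as "turbot" come up more often. A lower one pushes the output towards the greedy choice.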

We cannot foresee how machines will follow our instructions, as this thread explains - from Google’s Gemini to Marvel’s Ultron, all the way back to the OG - I, Robot by Isaac Asimov. This is known as the 'AI alignment problem' - the difficulty of creating AI systems that reliably do what we want them to do. Misalignments between our instructions and the AI's behavior can manifest in ways ranging from unintentionally humorous (like the subreddit r/TechnicallyTheTruth, for instances where something is accurate but misses the point) to potentially dangerous (like the subreddit r/MaliciousCompliance, where rules are followed to a fault).

Every single comedic large-scale error by AI is evidence that when it is even more powerful and complex, the things it’ll do wrong will be utterly unpredictable and some of them will be very consequential.

In something as regulated and sensitive as medicine, we are likely decades away from AIs replacing doctors. The same applies to most high-level jobs with a lot on the line, where you cannot afford inexplicable mistakes at large scales.

Things are never going to be the same again though (then again, have they ever?). We will have 100x engineers, doctors, lawyers and everything in between, using AI to do more with less. Does this mean 100x fewer of these jobs? The jury is still out. Up until the 20th century, the vast majority of the population were farmers. Now, there are fewer (100x fewer?) farmers supporting far larger populations with the help of sophisticated machines.

We have come a long way from “turbot ax” to GPT and the economic effects are undeniable. We are heading into a new economy, different from the one before and the others before that. With the advent of machines and computers, there was a scare that there would be no jobs in the future. Yet here we are, stronger than ever before.

Maybe the AI-enabled future is similar to the one in Wall-E where there are no jobs; where humans don’t create, and only consume. But past technological revolutions suggest that while the nature of work may change, new opportunities also emerge. Until we get there, all we can do is keep moving onward and upward, strategically leveraging AI while staying mindful of its limitations and risks.